Artificial Intelligence: What It Really Is, How It Works, and What We Need to Know

When people talk about AI, they think of ChatGPT or other chatbots — but the reality is far broader than that.

The mainstream media has conditioned us to accept an equation as simple as it is misleading: artificial intelligence equals chatbots. But the technological reality is far larger, more complex, and more fragmented than that. To use digital tools consciously, the first step is to dismantle this conflation and map the territory for what it actually is.

"Using the word 'intelligence' for these processes was, from the very beginning, a powerful communicative choice — one capable of evoking a deep analogy with the human mind, but often a deceptive one."

The term "artificial intelligence" is, historically, a label coined by the scientific community to immediately evoke an analogy with what we consider the most precious characteristic of human beings. This choice worked extraordinarily well, but it also generated decades of misunderstanding, leading us to believe that computers possess a living comprehension of the world. In reality, artificial intelligence is a vast field of research that groups together profoundly heterogeneous technologies. Under this broad umbrella we find machine learning, deep learning (deep neural networks), computer vision, natural language processing, and expert systems based on predefined logical rules. The so-called LLMs (Large Language Models) — like ChatGPT or Gemini — are only a very specific subset of this map, not a synonym for the entire discipline.

To navigate media narratives effectively, you need to master one fundamental distinction: that between Artificial Narrow Intelligence (ANI) and Artificial General Intelligence (AGI). All artificial intelligence that exists today — including the kind that writes poetry or generates photorealistic images — belongs to the first category: systems trained to perform specific tasks within well-defined domains. AGI, by contrast, describes a theoretical system capable of performing any human cognitive task flexibly, adapting to ever-new contexts. It is crucial to know this: AGI, as of today, simply does not exist. The scientific community is still deeply divided on how to even define it. And yet the media's portrayal of artificial intelligence frequently plays on the confusion between these two entirely different things.

A Longer History Than We Think

Imagine a group of university researchers in the 1950s, convinced they could make decisive progress on the problem of the human mind over the course of a single summer. This is not a joke: in 1956, during a summer workshop at Dartmouth College, the founding figures of the discipline, including John McCarthy and Marvin Minsky, genuinely believed that a few months of intense work would bring significant advances toward replicating every aspect of human intelligence on a machine. The optimism was almost reckless. And the real world punished them harshly for it.

"The current AI boom is not a sudden act of magic: it is the outcome of decades of research, broken promises, slashed funding, and sudden algorithmic revolutions."

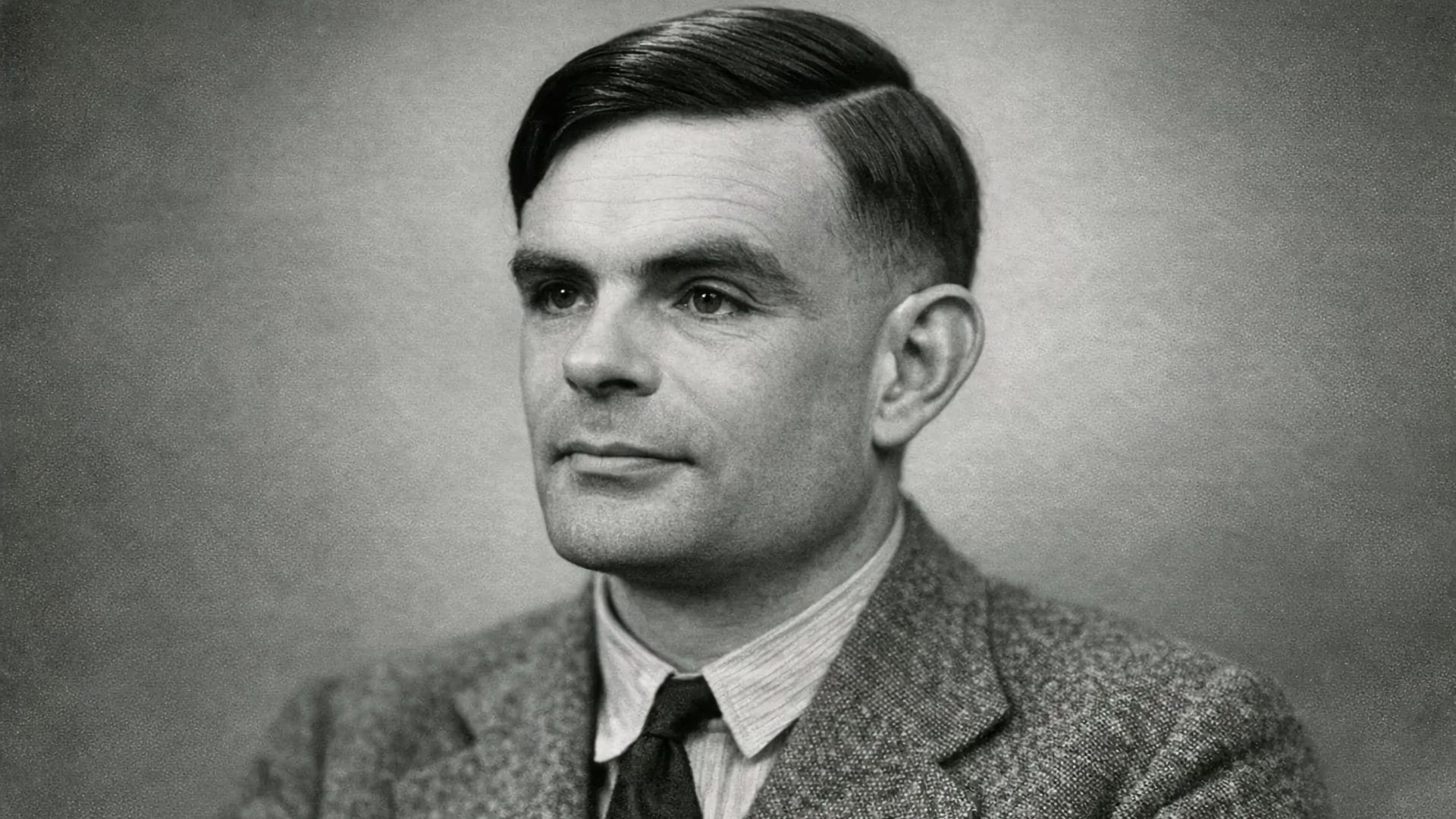

The theoretical roots of the technology that today seems to have fallen from the future actually trace back to 1950, when the British mathematician Alan Turing published a landmark paper proposing his celebrated "Imitation Game": a test to evaluate whether a machine could simulate human thought indistinguishably through written conversation. It was a philosophical provocation that anticipated by decades the problems society faces today. But the distance between the intuition and its practical realization proved to be immense. The complexity of natural language, the ambiguity of the real world, and the limited computing power available led to repeated crises of confidence and drastic cuts to funding — periods that scholars refer to as the "AI winters" of the 1970s and 1980s. Years in which those who continued working in this field were often ridiculed by their colleagues.

The breakthrough came gradually, and required a radical paradigm shift. Rather than programming predefined logical rules, researchers began building architectures loosely inspired by the biological brain, allowing the machine to infer patterns directly from data. The historical inflection point came in 2012, when a neural network called AlexNet demonstrated unprecedented visual recognition capabilities, paving the way for modern deep learning. But when it comes to language, and to the fluent responses you read today on your screens, the decisive year is 2017. A group of Google researchers published a paper titled "Attention Is All You Need", introducing the Transformer architecture, which tackled the problem of keeping track of context across a text: the system learned to weigh the importance of each word relative to all the others in the entire sequence. It is from this specific technical insight that the current generative explosion was born. Technology does not advance through magical leaps, but through patient accumulation and sudden engineering accelerations.
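If you are curious what "weighing each word relative to all the others" can look like in practice, here is a minimal sketch in Python. It is a deliberately stripped-down illustration, not the architecture from the paper: the three words and their two-number vectors are invented for the example, and the learned projection matrices that a real Transformer applies before comparing words are left out.

```python
import math

def softmax(scores):
    """Turn arbitrary scores into positive weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def dot(a, b):
    """Similarity between two vectors: multiply position by position and add up."""
    return sum(x * y for x, y in zip(a, b))

def self_attention(vectors):
    """For each word vector, return (weights over all words, blended vector)."""
    scale = math.sqrt(len(vectors[0]))
    results = []
    for query in vectors:
        # Compare this word with every word in the sequence, itself included.
        weights = softmax([dot(query, key) / scale for key in vectors])
        # Blend all the word vectors according to those weights.
        blended = [
            sum(w * v[i] for w, v in zip(weights, vectors))
            for i in range(len(query))
        ]
        results.append((weights, blended))
    return results

# Hypothetical vectors, chosen by hand purely for illustration.
words = ["bank", "river", "money"]
vectors = [[1.0, 1.0], [1.0, 0.0], [0.0, 1.0]]

attended = self_attention(vectors)
for word, (weights, _) in zip(words, attended):
    print(word, [round(w, 2) for w in weights])
```

Each printed row shows how much one word "looks at" every word in the sequence. In this toy setup, "river" gives more weight to "bank" than to "money" only because their invented vectors happen to overlap more.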

How It Works: Patterns, Weights, and Predictions

When you read a response generated by an algorithm — perfectly fluid, contextualised, produced in a matter of seconds — do you ever wonder what is really formulating those thoughts beneath the surface of the screen? It is a profoundly human instinct to anthropomorphise, to attribute a soul to something that seems to speak to us. But to become conscious users, you need to learn how to open the hood of these machines and look inside without being deceived by the vocabulary.

"Models do not know, do not believe, and do not understand: they recognise statistical patterns and calculate, word by word, which symbol is most likely to come next."

Terms like "intelligence", "learning", or "neural network" invite you to imagine an artificial brain that reflects and comprehends. But the computational reality is very different. Artificial neural networks share with our biological neurons only a vague structural analogy: their mathematical functioning is radically different, and there is no thought contained within the microchips. Imagine the network not as a brain, but as a gigantic system of numerical weights and connections. Each node receives signals, sums them by applying different weights, and transmits the result forward through successive layers.
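To make the "system of numerical weights" concrete, here is a minimal sketch of two tiny layers in Python. All the numbers are invented for illustration. The bias term and the sigmoid "squashing" function are common ingredients that the description above does not mention, so treat them as assumptions of this example.

```python
import math

def sigmoid(x: float) -> float:
    """Squash any real number into the range between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-x))

def layer_forward(inputs, weights, biases):
    """Each node sums its weighted inputs, adds a bias, and passes the result on."""
    outputs = []
    for node_weights, bias in zip(weights, biases):
        total = sum(w * x for w, x in zip(node_weights, inputs)) + bias
        outputs.append(sigmoid(total))
    return outputs

# Hypothetical values: two input signals, two hidden nodes, one output node.
inputs = [1.0, 0.0]
hidden_weights = [[0.5, -0.5], [1.0, 1.0]]
hidden_biases = [0.0, -1.0]
output_weights = [[1.0, -1.0]]
output_biases = [0.0]

hidden = layer_forward(inputs, hidden_weights, hidden_biases)
output = layer_forward(hidden, output_weights, output_biases)
print("hidden layer:", hidden)
print("output layer:", output)
```

Nothing in this code "thinks": it multiplies, adds, and passes numbers along. A real model does the same thing with billions of weights instead of six.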

The lifecycle of these models is divided into two distinct phases: training and inference. During training, the model does not "study" concepts to understand them: it is exposed to enormous quantities of text with one elementary task, which is to guess what the next word in a sequence will be. After every guess, a mathematical formula called the loss function measures how far the prediction was from the word that actually followed, and the system recalibrates its numerical parameters to make that error smaller: connections that pointed towards the right word are strengthened, and those that pointed away from it are weakened. This operation is repeated billions of times, until the model has mapped the statistical relationships between words on an industrial scale.
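The training loop can be sketched at toy scale in a few lines of Python. This is an illustration under heavy assumptions, not how production models are built. The "model" is a single table with one weight per pair of words, the training text is one invented sentence, the loss is cross-entropy, and the learning rate of 0.5 is an arbitrary choice.

```python
import math

# Invented training text; real models see billions of pages.
corpus = "the cat sat on the mat the cat sat on the sofa".split()
vocab = sorted(set(corpus))
index = {word: i for i, word in enumerate(vocab)}
pairs = [(index[a], index[b]) for a, b in zip(corpus, corpus[1:])]

# One weight per (current word, next word) pair, all starting at zero.
weights = [[0.0] * len(vocab) for _ in vocab]

def softmax(scores):
    """Turn a row of weights into probabilities that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def average_loss():
    """Cross-entropy loss: low when the true next word gets high probability."""
    losses = [-math.log(softmax(weights[cur])[nxt]) for cur, nxt in pairs]
    return sum(losses) / len(losses)

def train_epoch(learning_rate=0.5):
    """One pass over the text, nudging the weights after every guess."""
    for cur, nxt in pairs:
        probs = softmax(weights[cur])
        for j in range(len(vocab)):
            target = 1.0 if j == nxt else 0.0
            # Raise the weight of the true next word, lower the others.
            weights[cur][j] -= learning_rate * (probs[j] - target)

loss_before = average_loss()
for _ in range(50):
    train_epoch()
loss_after = average_loss()
print("loss before training:", round(loss_before, 3))
print("loss after training:", round(loss_after, 3))
```

Before training, every word is equally likely and the loss is at its starting value. After fifty passes it is lower, although the program has learned nothing about cats or mats: it has only adjusted numbers until its guesses match the text more often.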

When you, during the inference phase, ask ChatGPT a question, the system is not "thinking" about the answer: it is calculating the most statistically probable trajectory of symbols, word by word, based on the billions of parameters optimised during training.
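Here is a minimal sketch of that word-by-word process in Python. It rests on simplifying assumptions. Simple counts of which word follows which, taken from one invented sentence, stand in for the billions of trained parameters. The hypothetical `generate` function always picks the single most frequent continuation, whereas real systems usually add some controlled randomness to the choice.

```python
from collections import Counter, defaultdict

# Invented text; the counts below stand in for trained parameters.
corpus = "the cat sat on the mat and the cat sat on the sofa".split()

next_word_counts = defaultdict(Counter)
for current, following in zip(corpus, corpus[1:]):
    next_word_counts[current][following] += 1

def generate(start, max_words=5):
    """Extend a text one word at a time, always choosing the likeliest next word."""
    words = [start]
    for _ in range(max_words):
        candidates = next_word_counts.get(words[-1])
        if not candidates:
            break  # this word was never followed by anything in the text
        words.append(candidates.most_common(1)[0][0])
    return words

print(" ".join(generate("mat", max_words=4)))
```

Notice that after "the" the program always continues with "cat", because that is the most frequent pairing in its data. It never stops to consider whether a cat is actually present.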

A concrete metaphor may help: think of a JPEG file. When you compress an image, you lose the original details but retain a recognisable form — the file contains an approximated representation of reality, not reality itself. Language models do something similar with texts: they compress billions of pages into numerical matrices, losing the original content but retaining a highly sophisticated statistical pattern that allows new and fluent sentences to be generated. The problem arises when you mistake that fluency for understanding.

The Problem of Opacity: When We Don't Know the Why

Would you trust a doctor who prescribes you life-saving treatments perfectly, but categorically refuses to explain the clinical reasoning behind their diagnosis? This is not a thought experiment in moral philosophy: it is a fairly precise description of the most urgent problem our society must face today with artificial intelligence. It is called the "black box" problem.

"Opacity is not a temporary technical flaw destined to be fixed in the next version of the model: it is a structural feature of the way these systems learn."

The shift from artificial intelligence based on traceable logical rules to today's deep neural networks came at a very high cost: the loss of transparency. In a rule-based system, every step is readable and verifiable. In modern neural networks, meaning does not reside in a single point in the network, but emerges from the simultaneous activation of millions or billions of numerical parameters. This means that not even the researchers who developed the system are able to reconstruct, after the fact, the exact logical path that led the machine to generate a specific response. There is a fundamental incompatibility between our human demands for causal explanation and the mathematical nature of the process.

To understand this difference, think of an everyday scene: you come out of a train station and see a group of vendors displaying umbrellas. You immediately infer that it is raining outside. You have found a reliable correlation, and that is enough for you to make a practical decision. But if someone asked you to explain why it is raining, the presence of the vendors would be of no help at all, because correlation does not imply causation. Artificial intelligence works in much the same way: it finds extraordinary correlations in data, but it does not know the causes. This brings us back to the doctor metaphor: an algorithm may identify a tumour with greater precision than a human, but it cannot explain the biomedical reason behind the diagnosis. The doctor faces the ethical dilemma of trusting an opaque machine to save a life. The same risk recurs in the judicial sphere, where algorithms trained on past sentences can inherit and amplify historical biases while concealing them behind numerical calculations, and in access to credit, where an algorithmic red light denies a loan without anyone, neither the bank nor the customer, being able to understand exactly why.

There is a research field dedicated to this problem, called XAI (Explainable Artificial Intelligence), which works to make models more interpretable. Progress is being made, but the structural limitations remain. When opacity becomes embedded in the vital mechanisms of society, human responsibility dissolves into a chain of incomprehensible machines, and this is a problem that concerns everyone, not just software engineers. It is precisely for this reason that in August 2024 the European AI Act came into force: the world's first comprehensive regulatory framework that classifies artificial intelligence systems according to their level of risk to society, expressly prohibiting applications deemed unacceptable, such as social scoring systems or, outside a few narrow exceptions, real-time facial recognition by law enforcement in public spaces.

Reasonable Precautions: Neither Panic nor Blind Trust

Faced with such an opaque and rapidly changing landscape, how can you defend your space of critical autonomy? The answer lies neither in a paranoid rejection of technology, nor in the uncritical enthusiasm that leads us to delegate to algorithms decisions that belong to our own lives. The real alternative is to learn to govern the tool, rather than being governed by it. Here are some practical coordinates.

"The danger is not a machine taking control of the world: it is our very human laziness in uncritically delegating thought and responsibility to something that does not understand what it is doing."

When to use it and when not to

The fundamental criterion is to distinguish between a productive context and a learning context. In a productive context — where the objective is the quality and speed of the final output — using artificial intelligence to optimise repetitive tasks or to assist your work is not only a legitimate choice but a rational one. An expert translator who uses software to speed up the technical phase, reserving stylistic revision for themselves, is using technology as an amplifier of their own skills. By contrast, in a learning context — and this applies with particular force in educational settings — the goal is never the product, but the cognitive process required to reach it. When a student delegates the writing of an essay to artificial intelligence, they obtain the result but skip the mental training. Experts call this phenomenon deskilling: the gradual erosion of skills that are no longer exercised. Do not use the machine to replace the hard work that builds your intellect.

How to recognise the limits of an AI tool

The second step is to resist the psychological tendency, well documented and very widespread, to trust the machine more than we trust our own judgement. It is called automation bias, and it makes us vulnerable in a subtle way, because the responses of language models always sound confident, grammatically impeccable, and authoritative. You must remember that these systems are architecturally designed to maximise plausibility, not to return objective truth. From this structure arise the so-called hallucinations: fluent but factually false responses in which the model cites non-existent studies, invents dates, or constructs plausible quotations that come from no real source. The system does not lie with intent: it simply assembles symbols into statistically credible sequences that do not correspond to reality. As models become more refined, their errors become more sophisticated and more difficult to expose. Always exercise methodical doubt, and independently verify information you consider important.

What to ask for as users and as citizens

Finally — and perhaps most importantly — remember that digital literacy is not only about your individual behaviour in front of a screen. It is also about your awareness as citizens holding rights within a public space that is rapidly transforming. You do not have to accept that your personal data and your information environment are managed solely according to the market logic of major technology platforms. You can demand and support regulatory frameworks such as the European AI Act, which attempts to place the individual at the centre of this process. You can ask for transparency from companies that use algorithms to make decisions about you — from health insurance to your child's school curriculum. And you can start, tomorrow morning, with something very simple: the next time an artificial intelligence tool gives you an important answer, ask yourself not only whether that answer is correct, but on what basis you are deciding to believe it.

This article is the first in a series dedicated to artificial intelligence on Beyond The Screen.

In the next pieces, we will explore how AI is already entering school life and what practical tools you have at your disposal to navigate it.

If you have questions, doubts, or direct experiences with these topics, write them in the comments: that is where the next articles are born. And if you want to receive new content directly, subscribe to the newsletter — you'll find it at the bottom of the page.